Financial Services

Safe AI Rollouts for Financial Services

Mountain Theory helps banks, capital markets firms, and asset managers securely deploy AI systems such as BloombergGPT, ChatGPT, Claude, Microsoft Copilot, and proprietary trading and risk models while protecting customer data, capital, and regulatory standing.

AI adoption in financial services is accelerating across model risk, trading, fraud, and customer-facing workflows. Mountain Theory provides an operational control layer that helps institutions meet SR 11-7, SEC, FINRA, SOX, GLBA, and PCI DSS obligations and manage the risks of autonomous AI.

The Shift Is Already Happening

AI Is Already Inside Financial Workflows

Banks, asset managers, and trading firms are rapidly adopting AI across model risk, trading, fraud detection, and customer-facing services.

The challenge is no longer whether AI will be adopted. The challenge is how to deploy it safely before governance gaps create financial, regulatory, and reputational risk.

Operational AI Governance

Protecting AI Systems at the Point Where Decisions Become Actions

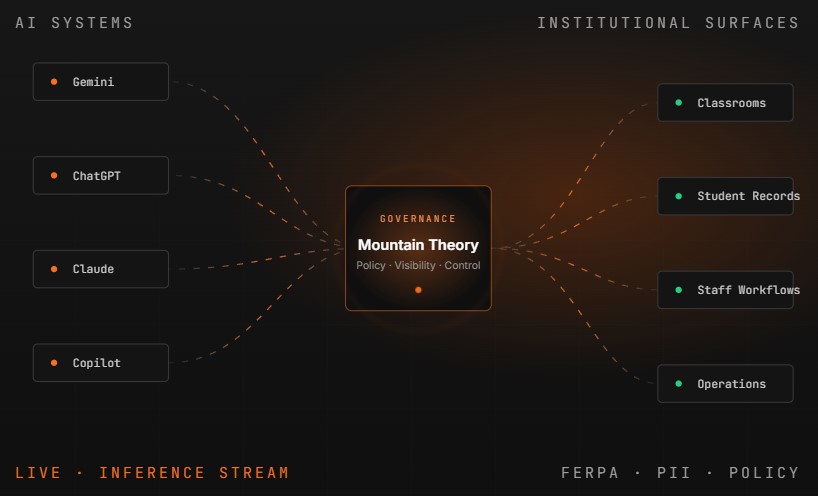

Traditional cybersecurity protects networks, identities, and infrastructure. Mountain Theory focuses on a different problem: controlling unsafe AI behavior before it executes inside trading, risk, and customer workflows.

AI models generate decisions across trading, risk, fraud, and customer surfaces.

Mountain Theory evaluates intent, policy, sensitivity, and authorization in real time.

Allowed actions flow through. Unsafe actions are held or blocked, with a full audit trail.

Built for the AI-Driven Financial Era

Financial institutions are rapidly standardizing around AI-powered trading, risk, fraud, and customer workflows. Mountain Theory adds governance and operational oversight as financial AI adoption expands.

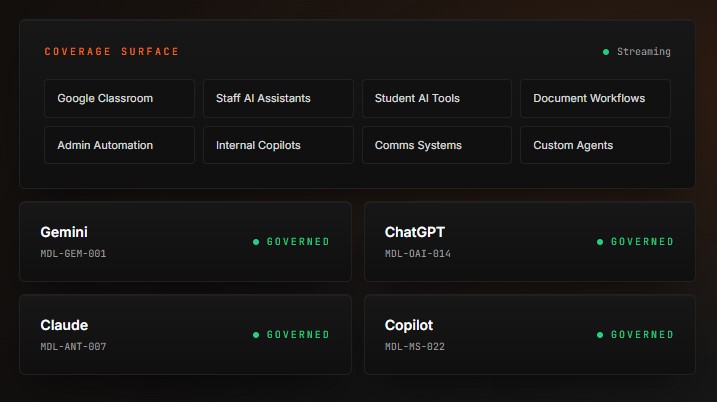

Model-agnostic by design. The same control plane covers BloombergGPT, ChatGPT, Claude, Microsoft Copilot, and proprietary financial AI deployments.

Governance Frontier

The New Governance Challenge

Four operational risks every financial institution should account for as AI moves into production.

Regulatory and Customer Data Exposure

AI systems increasingly interact with non-public personal information, customer financial data, and material non-public information.

Unapproved Financial AI

Trading desks, lines of business, and analysts are adopting AI tools faster than model risk management and compliance policies can keep pace.

AI Actions Without Oversight

Autonomous workflows can execute trades, approvals, and customer actions faster than humans or compliance teams can intervene.

Lack of Operational Visibility

Many institutions cannot clearly see how AI is being used across desks, departments, and customer channels.

Design Partners

Built for Financial Institutions Preparing for the Future of AI

Mountain Theory is exploring the future of runtime AI governance alongside forward-looking banks, capital markets firms, and asset managers focused on responsible adoption.

The institutions that lead the next decade of finance will not simply adopt AI. They will operationalize it responsibly.

Prepare Your Institution for the Next Phase of AI Adoption

Mountain Theory helps financial institutions move beyond experimentation toward governed, operational AI systems.